Extension overview

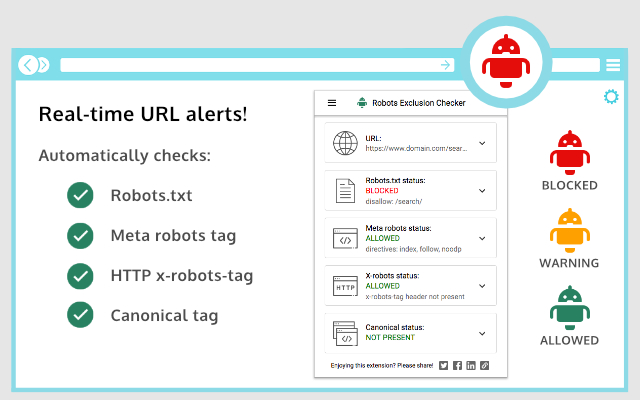

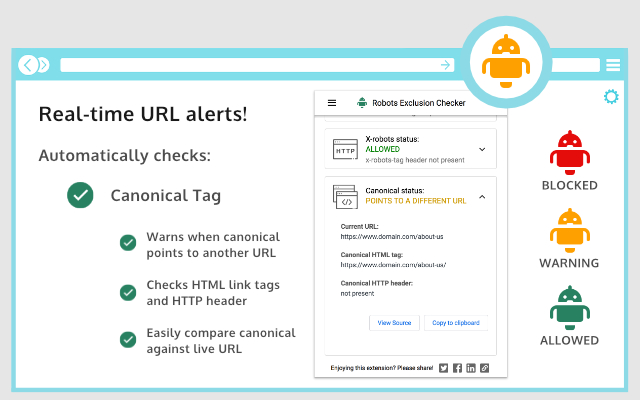

Live URL checks against robots.txt, meta robots, x-robots-tag & canonical tags. Simple Red, Amber & Green status. An SEO Extension.

Robots Exclusion Checker is designed to visually indicate whether any robots exclusions are preventing your page from being crawled or indexed by Search Engines.

## The extension reports on 5 elements:

1. Robots.txt

2. Meta Robots tag

3. X-robots-tag

4. Rel=Canonical

5. UGC, Sponsored and Nofollow attribute values

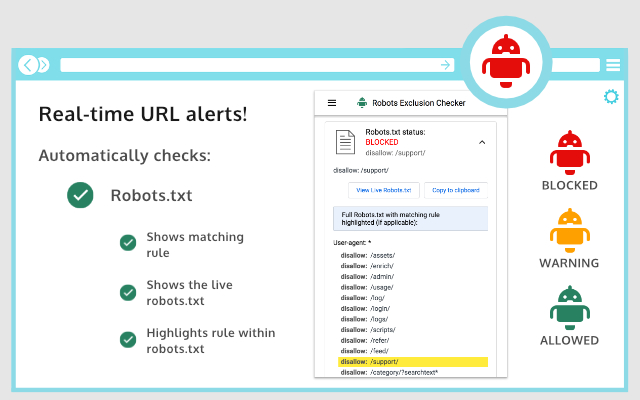

– Robots.txt

If a URL you are visiting is affected by an "Allow" or "Disallow" rule within robots.txt, the extension will show you the specific rule, making it easy to copy it or to visit the live robots.txt. You will also be shown the full robots.txt with the specific rule highlighted (if applicable). Cool, eh!
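To illustrate what "the specific rule" means, here is a minimal Python sketch of picking the deciding rule out of robots.txt. It is not the extension's own parser. It assumes the longest-match precedence described in RFC 9309 and Google's documentation (the longest matching Allow/Disallow pattern wins, and Allow wins a tie), supports the `*` and `$` wildcards, merges every group that names the chosen user-agent, and otherwise falls back to the `*` group(s). The names `parse_groups`, `pattern_to_regex`, `matching_rule` and `ROBOTS_TXT` are hypothetical, and user-agent matching is simplified to an exact, case-insensitive comparison.

```python
from __future__ import annotations

import re
from typing import Optional


def parse_groups(robots_txt: str) -> list[tuple[list[str], list[tuple[str, str]]]]:
    """Split robots.txt into (user_agents, rules) groups; rules are (directive, pattern)."""
    groups = []
    agents: list[str] = []
    rules: list[tuple[str, str]] = []
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if ":" not in line:
            continue
        key, value = (part.strip() for part in line.split(":", 1))
        key = key.lower()
        if key == "user-agent":
            if rules:  # a user-agent line after rules starts a new group
                groups.append((agents, rules))
                agents, rules = [], []
            agents.append(value.lower())
        elif key in ("allow", "disallow") and agents:
            rules.append((key, value))
    if agents:
        groups.append((agents, rules))
    return groups


def pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern ('*' wildcard, trailing '$' anchor) to a regex."""
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    regex = "".join(".*" if ch == "*" else re.escape(ch) for ch in body)
    return re.compile(regex + ("$" if anchored else ""))


def matching_rule(robots_txt: str, user_agent: str, path: str) -> Optional[tuple[str, str]]:
    """Return the (directive, pattern) that decides `path`, or None if no rule matches.

    Hypothetical helper: precedence assumed per RFC 9309 (longest pattern wins, Allow wins ties).
    """
    groups = parse_groups(robots_txt)
    agent = user_agent.lower()
    # Merge every group naming this agent; otherwise fall back to the "*" group(s).
    named = [rules for agents, rules in groups if agent in agents]
    chosen = named or [rules for agents, rules in groups if "*" in agents]
    best: Optional[tuple[str, str]] = None
    for rules in chosen:
        for directive, pattern in rules:
            if not pattern or not pattern_to_regex(pattern).match(path):
                continue  # an empty pattern matches nothing
            longer = best is None or len(pattern) > len(best[1])
            allow_tie = best is not None and len(pattern) == len(best[1]) and directive == "allow"
            if longer or allow_tie:
                best = (directive, pattern)
    return best


ROBOTS_TXT = """\
User-agent: *
Disallow: /

User-agent: Googlebot
Disallow: /shop/
Allow: /shop/*.html$
Disallow: /*?sort=
"""

if __name__ == "__main__":
    print(matching_rule(ROBOTS_TXT, "Googlebot", "/shop/shoes.html"))       # ('allow', '/shop/*.html$')
    print(matching_rule(ROBOTS_TXT, "Googlebot", "/shop/list?sort=price"))  # ('disallow', '/*?sort=')
    print(matching_rule(ROBOTS_TXT, "Bingbot", "/about"))                    # ('disallow', '/')
```

Returning the pattern rather than a bare yes/no is what makes it possible to show and highlight the exact line that applies.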

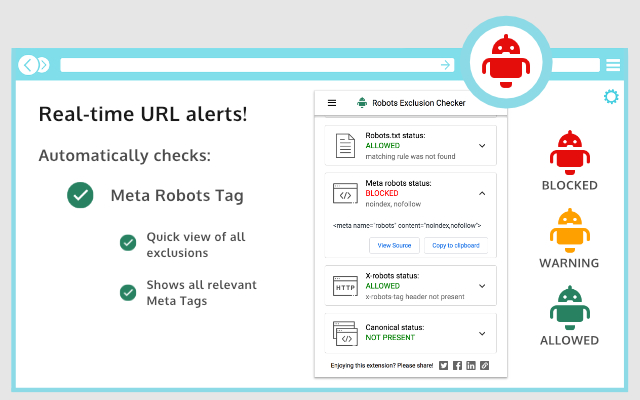

– Meta Robots Tag

Any Robots Meta tags that direct robots to "index", "noindex", "follow" or "nofollow" will flag the appropriate Red, Amber or Green icon. Directives that won’t affect Search Engine indexation, such as "nosnippet" or "noodp", will be shown but won’t be factored into the alerts. The extension makes it easy to view all directives, and it also shows, in full, any HTML meta robots tags that appear in the source code.
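The split between directives that drive the alerts and directives that are only displayed can be sketched in Python with the standard library's `HTMLParser`. This is an illustration under assumptions, not the extension's code: the scored set is taken from the four directives named above, `MetaRobotsParser` and `classify_meta_robots` are hypothetical names, and reading one crawler-specific meta name (such as `googlebot`) alongside `robots` is an assumed simplification.

```python
from __future__ import annotations

from html.parser import HTMLParser

# Directives named above as driving the Red/Amber/Green alerts; everything else
# (e.g. nosnippet, noodp) is listed but not scored. Assumed set, not the extension's.
SCORED_DIRECTIVES = {"index", "noindex", "follow", "nofollow"}


class MetaRobotsParser(HTMLParser):
    """Collect directives from <meta name="robots"> plus one crawler-specific meta name."""

    def __init__(self, bot_name: str = "googlebot") -> None:
        super().__init__()
        self.names = {"robots", bot_name.lower()}
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":  # HTMLParser already lower-cases tag and attribute names
            return
        attr = {name: value or "" for name, value in attrs}
        if attr.get("name", "").strip().lower() in self.names:
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",") if d.strip()]


def classify_meta_robots(html: str, bot_name: str = "googlebot") -> tuple[list[str], list[str]]:
    """Split the page's meta robots directives into (scored, shown-only) lists."""
    parser = MetaRobotsParser(bot_name)
    parser.feed(html)
    parser.close()
    scored = [d for d in parser.directives if d in SCORED_DIRECTIVES]
    shown_only = [d for d in parser.directives if d not in SCORED_DIRECTIVES]
    return scored, shown_only
```

For example, a page with `<meta name="robots" content="noindex, nosnippet">` yields `noindex` as a scored directive and `nosnippet` as shown-only.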

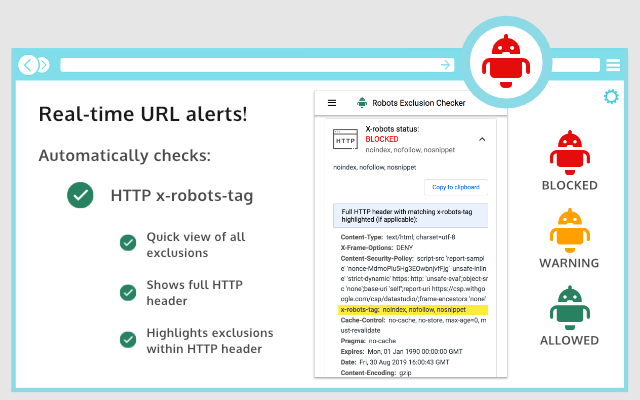

– X-robots-tag

Spotting robots directives in the HTTP header has been a bit of a pain in the past, but not any more with this extension. Any specific exclusions will be made very visible, as well as the full HTTP header, with the specific exclusions highlighted too!
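Part of what makes the header awkward to read by eye is that a response may carry several `X-Robots-Tag` headers, and each value may be prefixed with a crawler name (for example `googlebot: nofollow`). The Python sketch below shows one way to keep only the directives that apply to a given crawler. `x_robots_directives` and `COLON_DIRECTIVES` are hypothetical names, the list of colon-containing directives is an assumption, and dates that themselves contain commas (as can occur in `unavailable_after`) are not handled.

```python
from __future__ import annotations

# Directives whose own syntax contains a colon, so "name:" at the start of a
# value is not a user-agent prefix (assumed, non-exhaustive list).
COLON_DIRECTIVES = {"unavailable_after", "max-snippet", "max-image-preview", "max-video-preview"}


def x_robots_directives(header_values: list[str], bot_name: str = "googlebot") -> list[str]:
    """Return the X-Robots-Tag directives that apply to `bot_name`.

    Each header value is either "directive, directive" (applies to all crawlers)
    or "botname: directive, directive" (applies only to that crawler).
    """
    applicable: list[str] = []
    for value in header_values:
        target = None
        head, sep, rest = value.partition(":")
        head = head.strip().lower()
        if sep and "," not in head and head not in COLON_DIRECTIVES:
            target, value = head, rest  # value had a "botname:" prefix
        if target is None or target == bot_name.lower():
            applicable += [d.strip().lower() for d in value.split(",") if d.strip()]
    return applicable
```

With `urllib.request`, the raw values can be collected via `response.headers.get_all("X-Robots-Tag") or []`, since `get_all` returns `None` when the header is absent.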

– Canonical Tags

Although the canonical tag doesn’t directly impact indexation, it can still impact how your URLs behave within SERPs (Search Engine Results Pages). If the page you are viewing is allowed to bots but a canonical mismatch has been detected (the current URL is different to the canonical URL), the extension will flag an Amber icon. Canonical information is collected on every page from both the HTML and the HTTP header.
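A canonical mismatch check needs two small normalisation steps that the changelog mentions: ignoring the hash part of the URL, and resolving relative canonical URLs against the page before comparing. A minimal Python sketch of that comparison, using only `urllib.parse`, is shown below. It is an assumption-laden illustration rather than the extension's logic: `canonical_mismatch` is a hypothetical name, and the final comparison is a plain string match, so differences such as host letter case or a trailing slash would also count as a mismatch.

```python
from urllib.parse import urldefrag, urljoin


def canonical_mismatch(page_url: str, canonical_href: str) -> bool:
    """Return True if the canonical target differs from the page URL.

    A relative href is resolved against the page URL, and the #fragment is
    dropped from both sides before a plain string comparison.
    """
    page, _ = urldefrag(page_url)
    canonical, _ = urldefrag(urljoin(page_url, canonical_href.strip()))
    return page != canonical
```

For instance, `https://example.com/shoes/red#reviews` with a canonical of `/shoes/red` is not a mismatch, while `https://example.com/shoes/red?colour=blue` pointing at `https://example.com/shoes/red` is.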

– UGC, Sponsored and Nofollow

A new addition to the extension gives you the option to highlight any visible links that use a "nofollow", "ugc" or "sponsored" rel attribute value. You can control which links are highlighted and set your preferred colour for each. If you’d prefer this disabled, you can switch it off entirely.
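Deciding which links to highlight comes down to reading each link's `rel` attribute as a space-separated, case-insensitive list of tokens, so `rel="ugc nofollow"` matches both values. The Python sketch below applies that rule to static HTML with the standard library's `HTMLParser`. The extension itself works on the rendered page in Chrome, so treat this only as an illustration; `RelLinkParser`, `find_flagged_links` and `HIGHLIGHT_VALUES` are hypothetical names.

```python
from __future__ import annotations

from html.parser import HTMLParser

HIGHLIGHT_VALUES = {"nofollow", "ugc", "sponsored"}


class RelLinkParser(HTMLParser):
    """Collect <a> links whose rel attribute contains nofollow, ugc or sponsored."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[tuple[str, set[str]]] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        # rel is a space-separated, case-insensitive token list, e.g. rel="ugc nofollow"
        tokens = set((attr.get("rel") or "").lower().split())
        matched = tokens & HIGHLIGHT_VALUES
        if matched:
            self.links.append((attr.get("href") or "", matched))


def find_flagged_links(html: str) -> list[tuple[str, set[str]]]:
    """Return (href, matched rel values) for every link worth highlighting."""
    parser = RelLinkParser()
    parser.feed(html)
    parser.close()
    return parser.links
```

Returning the matched values per link, rather than a single flag, leaves room for a per-value colour choice like the one described above.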

## User-agents

Within settings, you can choose one of the following user-agents to simulate what each Search Engine has access to:

1. Googlebot

2. Googlebot news

3. Bing

4. Yahoo
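Simulating a search engine comes down to evaluating the same robots.txt as a different crawler token. As a rough sketch, Python's standard `urllib.robotparser` can do this. The tokens mapped to each setting (`Googlebot`, `Googlebot-News`, `bingbot`, and Yahoo's `Slurp`) are assumptions rather than values taken from the extension, and `access_by_agent` and `SAMPLE_ROBOTS_TXT` are hypothetical names.

```python
from __future__ import annotations

from urllib.robotparser import RobotFileParser

# Crawler tokens assumed for each user-agent setting listed above.
USER_AGENTS = {
    "Googlebot": "Googlebot",
    "Googlebot news": "Googlebot-News",
    "Bing": "bingbot",
    "Yahoo": "Slurp",
}

SAMPLE_ROBOTS_TXT = """\
User-agent: Googlebot-News
Disallow: /archive/

User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow: /
"""


def access_by_agent(robots_txt: str, url: str) -> dict[str, bool]:
    """Report, per simulated crawler, whether robots.txt lets it fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {label: parser.can_fetch(token, url) for label, token in USER_AGENTS.items()}


if __name__ == "__main__":
    print(access_by_agent(SAMPLE_ROBOTS_TXT, "https://example.com/archive/2020"))
```

`RobotFileParser` picks the first group, in file order, whose name appears within the crawler token, and then applies the first rule that matches as a plain path prefix, without `*` or `$` wildcards. That is why the more specific `Googlebot-News` group is listed before `Googlebot` in the sample, and why its verdicts can differ from Google's own longest-match behaviour.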

## Benefits

This tool will be useful for anyone working in Search Engine Optimisation (SEO) or digital marketing, as it gives a clear visual indication of whether the page is being blocked by robots.txt (many existing extensions don’t flag this). Crawl or indexation issues have a direct bearing on how well your website performs in organic results, so this extension should be part of your SEO developer toolkit for Google Chrome. It is also an alternative to some of the common robots.txt testers available online.

This extension is useful for:

– Faceted navigation review and optimisation (useful for seeing the robots controls behind complex or stacked facets)

– Detecting crawl or indexation issues

– General SEO review and auditing within your browser

## Avoid the need for multiple SEO Extensions

Within the realm of robots and indexation, there is no better extension available. In fact, by installing Robots Exclusion Checker you can avoid running multiple extensions in Chrome, which can slow it down.

Similar plugins include:

NoFollow

https://chrome.google.com/webstore/detail/nofollow/dfogidghaigoomjdeacndafapdijmiid

Seerobots

https://chrome.google.com/webstore/detail/seerobots/hnljoiodjfgpnddiekagpbblnjedcnfp

NoIndex,NoFollow Meta Tag Checker

https://chrome.google.com/webstore/detail/noindexnofollow-meta-tag/aijcgkcgldkomeddnlpbhdelcpfamklm

CHANGELOG:

1.0.2: Fixed a bug preventing meta robots from updating after a URL update.

1.0.3: Various bug fixes, including better handling of URLs with encoded characters. Robots.txt expansion feature to allow the viewing of extra-long rules. Now JavaScript history.pushState() compatible.

1.0.4: Various upgrades. Canonical tag detection added (HTML and HTTP Header) with Amber icon alerts. Robots.txt is now shown in full, with the appropriate rule highlighted. X-robots-tag now highlighted within full HTTP header information. Various UX improvements, such as "Copy to Clipboard" and "View Source" links. Social share icons added.

1.0.5: Forces a background HTTP header call when the extension detects a URL change but no new HTTP header info, mainly for sites heavily dependent on JavaScript.

1.0.6: Fixed an issue with the hash part of the URL when doing a canonical check.

1.0.7: Forces a background body response call in addition to HTTP headers, to ensure a non-cached view of the URL for JavaScript-heavy sites.

1.0.8: Fixed an error that occurred when multiple references to the same user-agent were detected within the robots.txt file.

1.0.9: Fixed an issue with the canonical mismatch alert.

1.1.0: Various UI updates, including a JavaScript alert when the extension detects a URL change with no new HTTP request.

1.1.1: Added additional logic for meta robots user-agent rule conflicts.

1.1.2: Added a German language UI.

1.1.3: Added UGC, Sponsored and Nofollow link highlighting.

1.1.4: Switched off nofollow link highlighting by default on new installs and fixed a bug related to HTTP header canonical mismatches.

1.1.5: Bug fixes to improve robots.txt parser.

1.1.6: Extension now flags 404 errors in Red.

1.1.7: No longer sends cookies when making a background request to fetch a page that was navigated to with pushState.

1.1.8: Improvements to the handling of relative vs absolute canonical URLs and unencoded URL messaging.

Found a bug or want to make a suggestion? Please email extensions @ samgipson.com
